EU AI Act Regulation 2024/1689

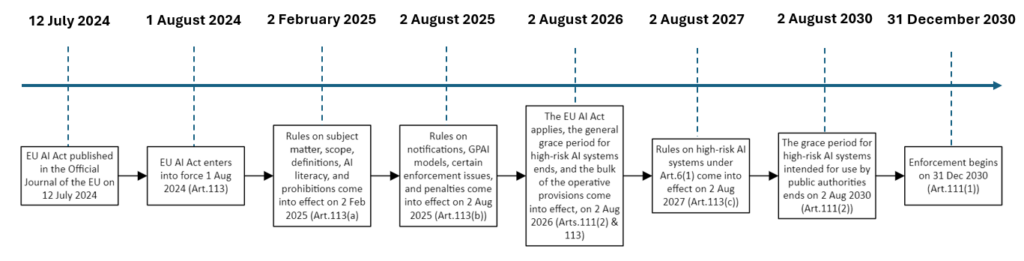

The European AI Act (Regulation (EU) 2024/1689) was published in the Official Journal of the European Union on July 12, 2024. It entered into force on August 1, 2024, twenty days after publication, with its obligations applying in stages. The Act marks a historic milestone: the world’s first comprehensive legal framework for artificial intelligence.

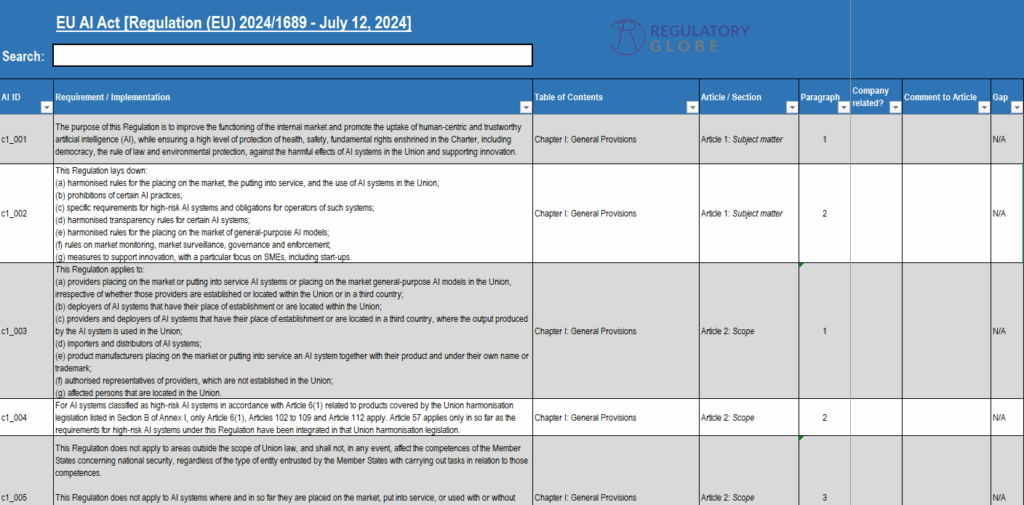

The EU AI Act aims to harmonize rules for the development, placing on the market, putting into service, and use of AI systems across the EU. Its goal is to foster human-centric and trustworthy AI while ensuring a high level of protection for health, safety, and fundamental rights.

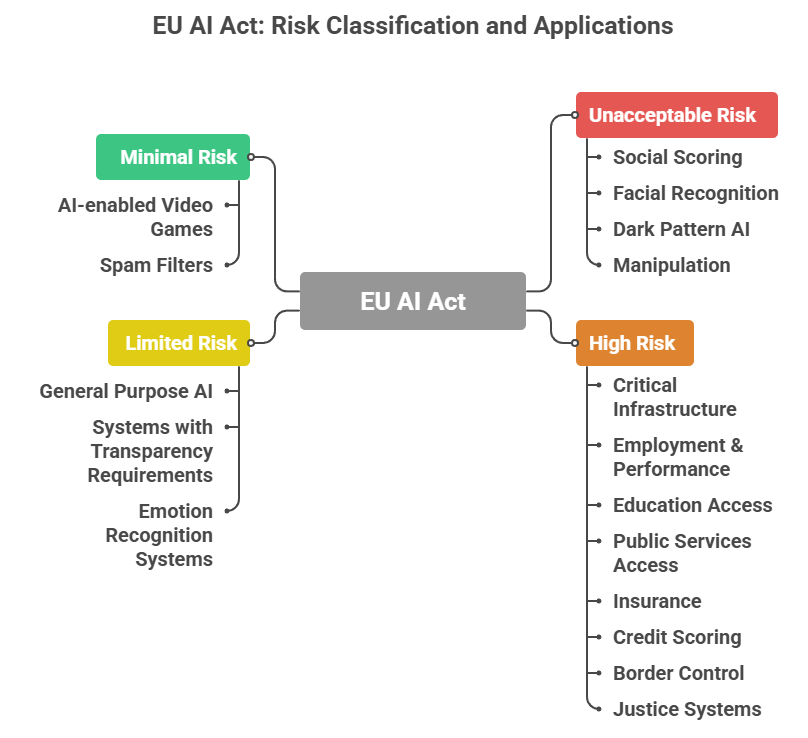

In other words, the Act draws clear lines between AI practices that are prohibited as an unacceptable risk, systems that are classified as high-risk, and cases where transparency is required. This creates both challenges and opportunities for AI developers and manufacturers.